Methodology

To address the key questions mentioned above, a qualitative survey was conducted in August 2025 using an online questionnaire, with 156 participants.

The demographic analysis included individuals born between 1995 and 2010, who, according to Simon Schnetzer’s (2024) definition, belong to Gen Z.

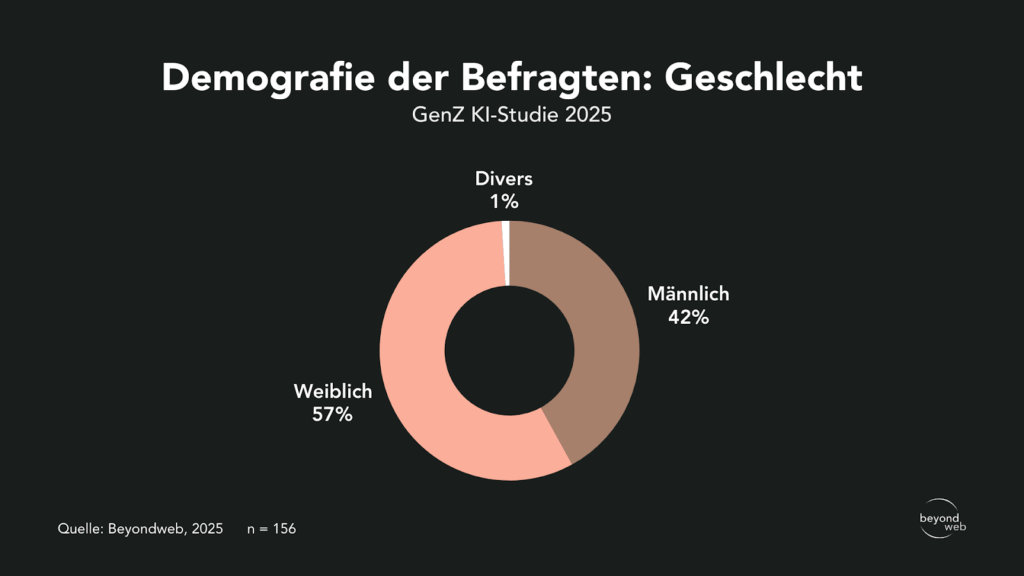

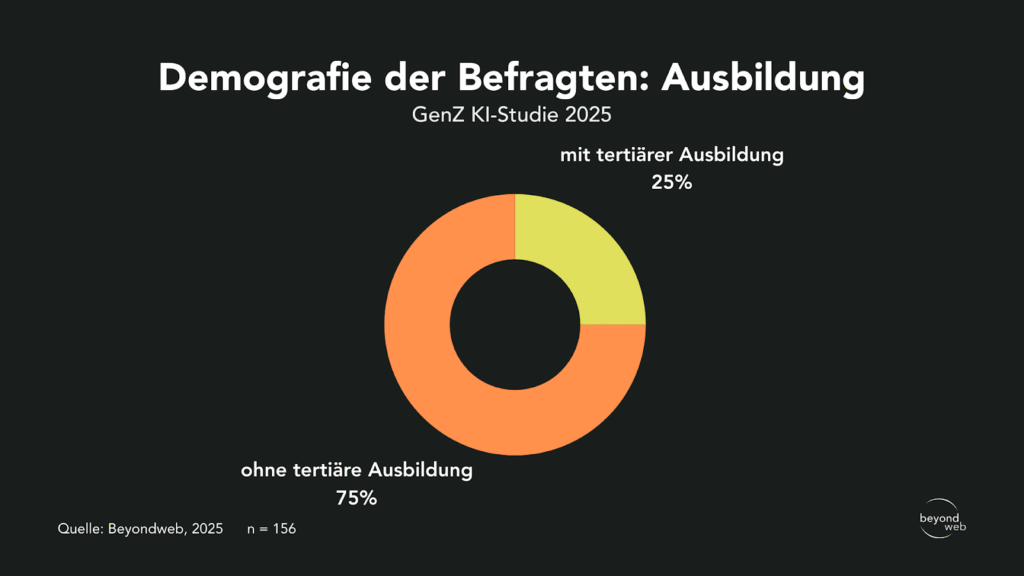

The respondents were segmented by educational level and gender.

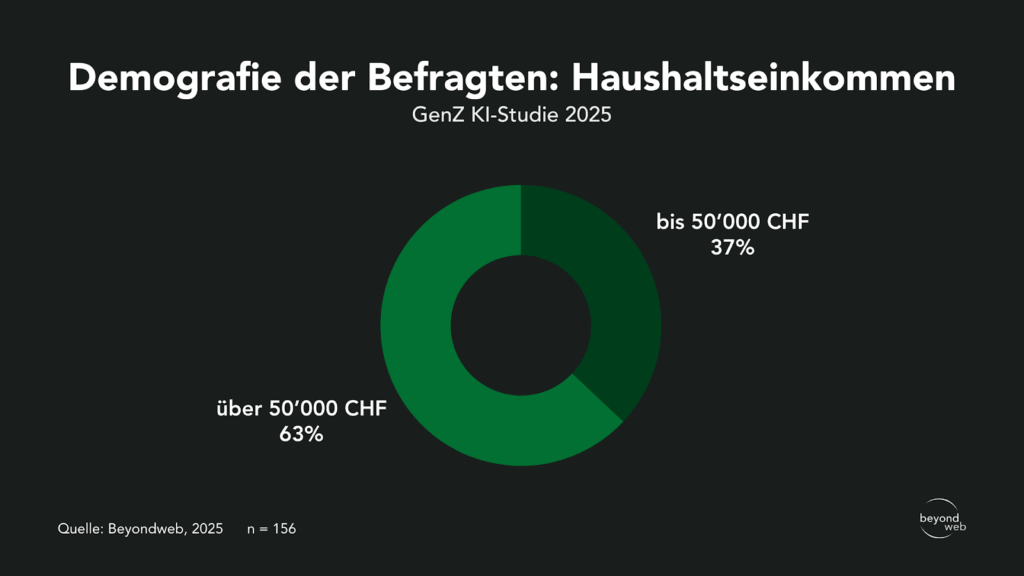

In addition, household income was surveyed.

The quality of the responses was ensured through a market research platform.

The sample is composed as follows:

- Gender: 42% male, 57% female, 1% other

- Education: 25% with a college degree, 75% without a college degree

- Household income: 37% with incomes up to CHF 50,000, 63% with incomes over CHF 50,000
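To make the subgroup sizes behind these percentages tangible, the shares can be converted into approximate headcounts. The following is a minimal sketch, assuming every percentage refers to the full sample of 156 participants; the function name `approx_headcount` is illustrative, and because the published shares are rounded, the resulting counts are estimates only.

```python
SAMPLE_SIZE = 156  # number of participants stated in the methodology

# Rounded gender shares as reported above (in percent).
gender_shares = {"male": 42, "female": 57, "other": 1}


def approx_headcount(share_percent: float, n: int = SAMPLE_SIZE) -> int:
    """Estimate how many respondents a rounded percentage corresponds to."""
    return round(share_percent / 100 * n)


for group, share in gender_shares.items():
    print(f"{group}: about {approx_headcount(share)} respondents")
```

Under this assumption, the smallest gender group comprises only about two people, so percentages reported for it in the results should be read with caution.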

Results

This section presents all survey questions and the corresponding results.

An interpretation of the results follows in the next section.

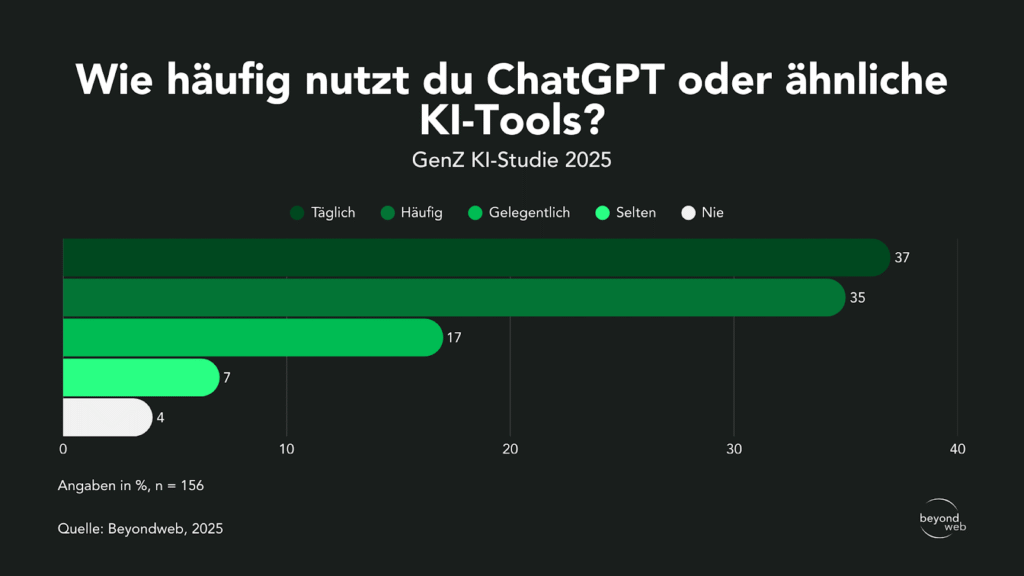

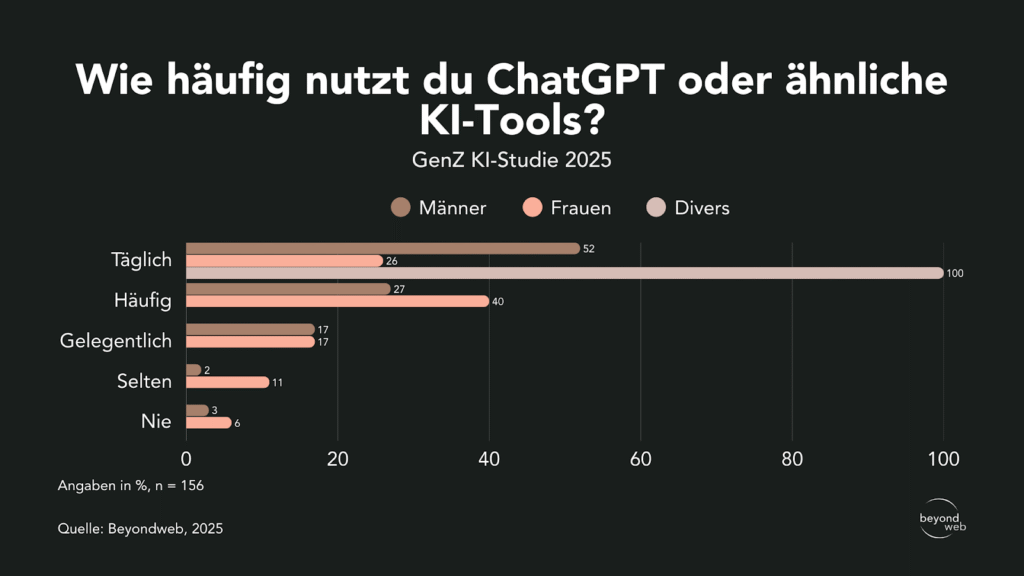

Frequency of ChatGPT use

According to the results of our study, 37% of respondents use ChatGPT daily, and 35% use the tool frequently.

17% use it occasionally, 7% use it rarely, and 4% said they never use it.

Responses from all respondents to the question “How often do you use ChatGPT or similar AI tools?”

When broken down by gender, 52% of men and 26% of women use ChatGPT every day.

Among the people who identified as non-binary and were surveyed, the figure is 100%.

40% of women and 27% of men use it frequently.

17% of men and 17% of women use it occasionally. 11% of women and 2% of men use it rarely.

3% of men and 6% of women have never used it.

Responses broken down by gender to the question “How often do you use ChatGPT or similar AI tools?”

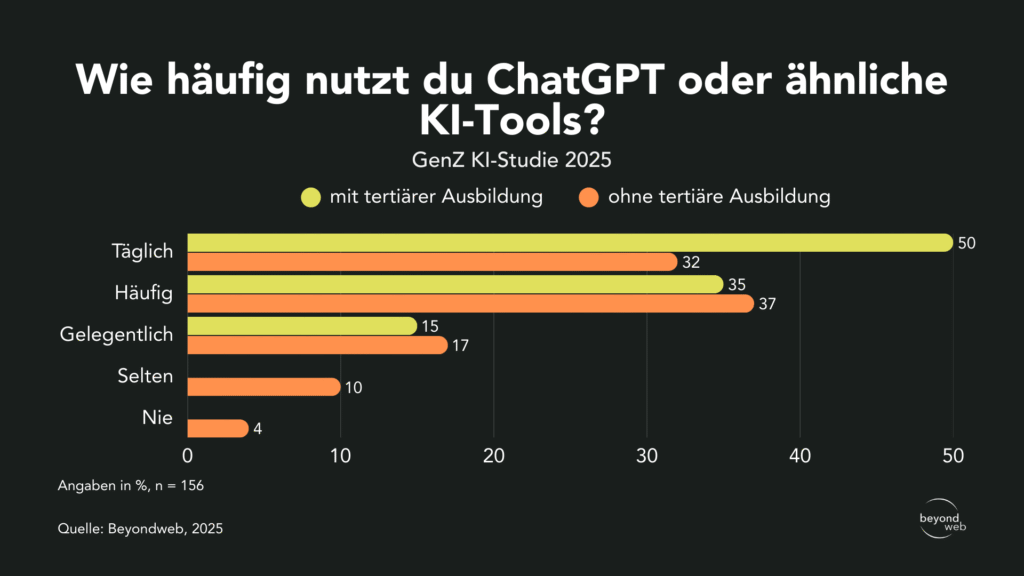

50% of college graduates say they use the tool every day, while the figure stands at 32% among those without a college degree.

35% of respondents with a university or college degree use ChatGPT frequently, compared to 37% of respondents without a college degree.

15% of those with a tertiary education and 17% of those without use the tool occasionally.

10% of respondents without a college degree use the tool rarely, while none of the respondents with a college degree do.

None of the respondents with a college degree said they never use ChatGPT, while 4% of respondents without a college degree say they have never used the tool.

Responses broken down by educational level to the question “How often do you use ChatGPT or similar AI tools?”

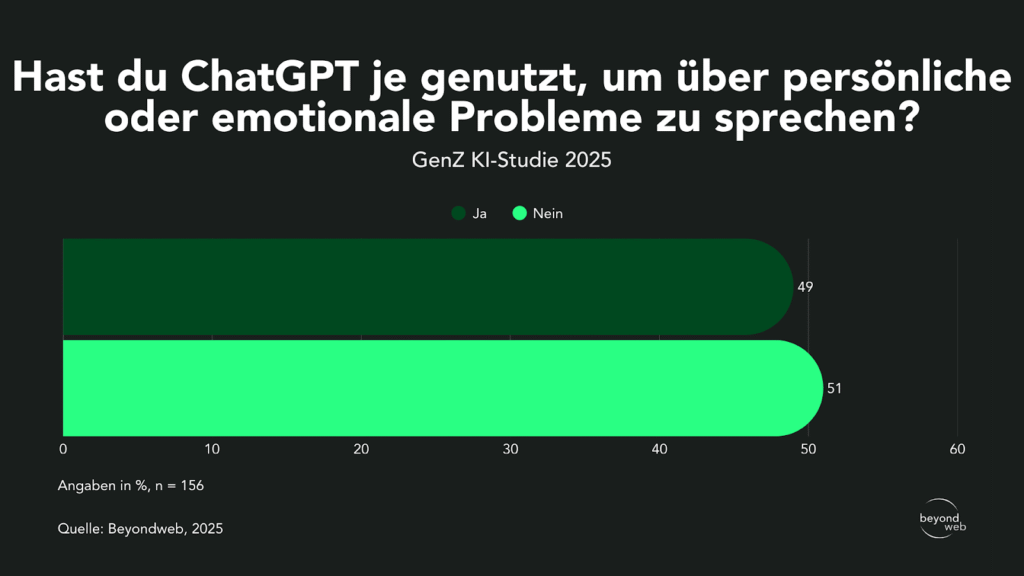

Use of ChatGPT in a mental health context

According to our survey results, 49% of respondents have already used ChatGPT to talk about personal or emotional issues.

51% said they had never done this before.

Responses from all respondents to the question “Have you ever used ChatGPT to talk about personal or emotional issues?”

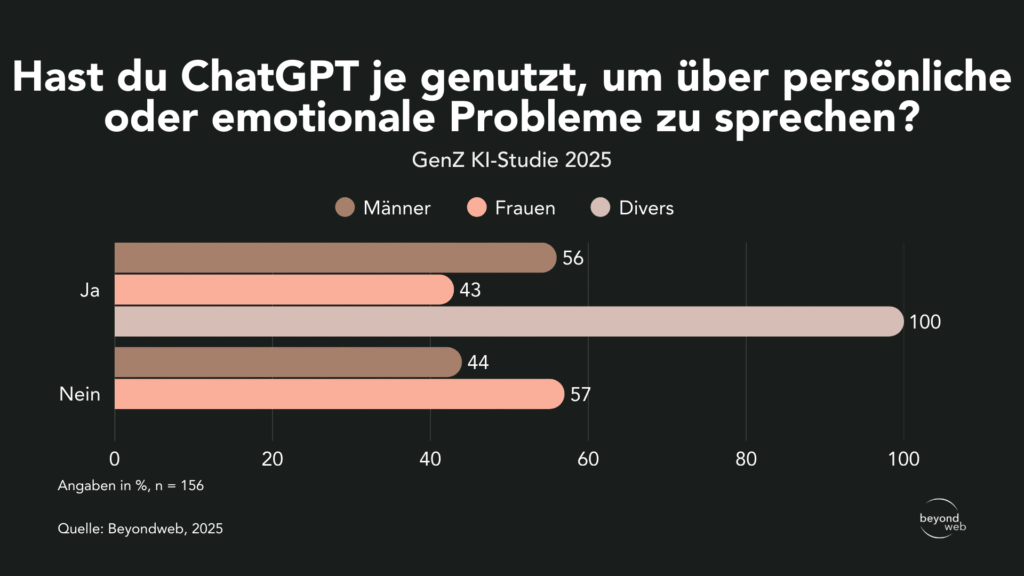
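With 156 participants, percentages like these carry noticeable sampling uncertainty. The sketch below computes a normal-approximation 95% margin of error for a proportion. It assumes a simple random sample, which an online panel recruited through a market research platform may not be, so the result is a rough orientation rather than a property of this study; the function name `margin_of_error` is illustrative.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion."""
    return z * math.sqrt(p * (1 - p) / n)


# 49% of 156 respondents reported using ChatGPT for personal or emotional issues.
moe = margin_of_error(0.49, 156)
print(f"49% +/- {moe * 100:.1f} percentage points")
```

Under these assumptions the uncertainty is roughly eight percentage points in either direction, which is larger than several of the subgroup differences reported below.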

When broken down by gender, the data shows that 56% of men (blue) and 43% of women (green) have already used ChatGPT for personal or emotional matters.

44% of men and 57% of women have not used ChatGPT for this purpose.

None of the respondents who identify as non-binary reported that they had never used ChatGPT to discuss personal or emotional issues.

Responses broken down by gender to the question “Have you ever used ChatGPT to talk about personal or emotional problems?”

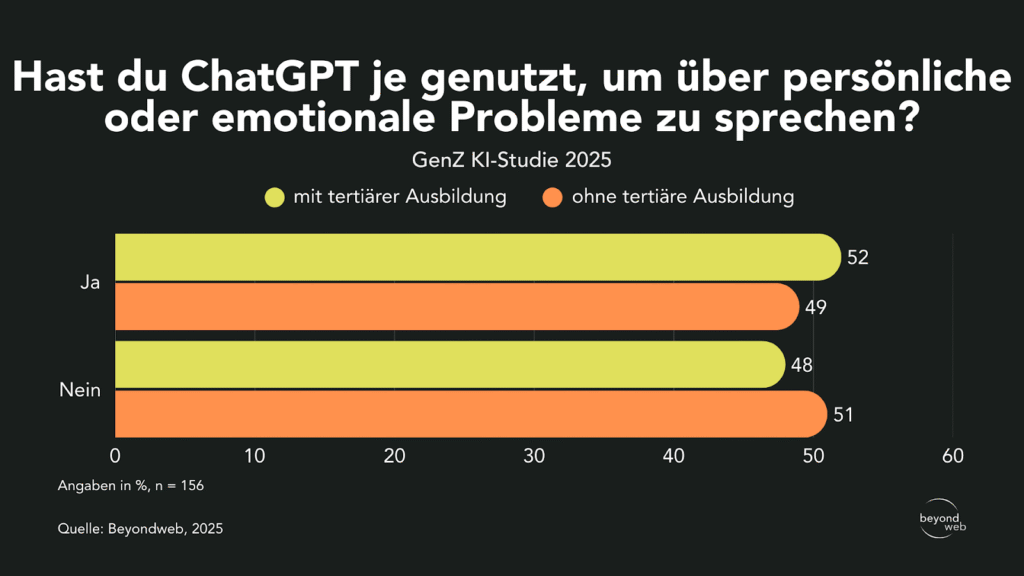

Broken down by educational background, 52% of those with a tertiary degree have already used ChatGPT for personal or emotional issues, compared to 49% of respondents without a tertiary degree.

48% of those with a tertiary degree and 51% of respondents without one have not used ChatGPT for this purpose.

Responses broken down by educational level to the question “Have you ever used ChatGPT to talk about personal or emotional problems?”

Applications of ChatGPT in the field of mental health

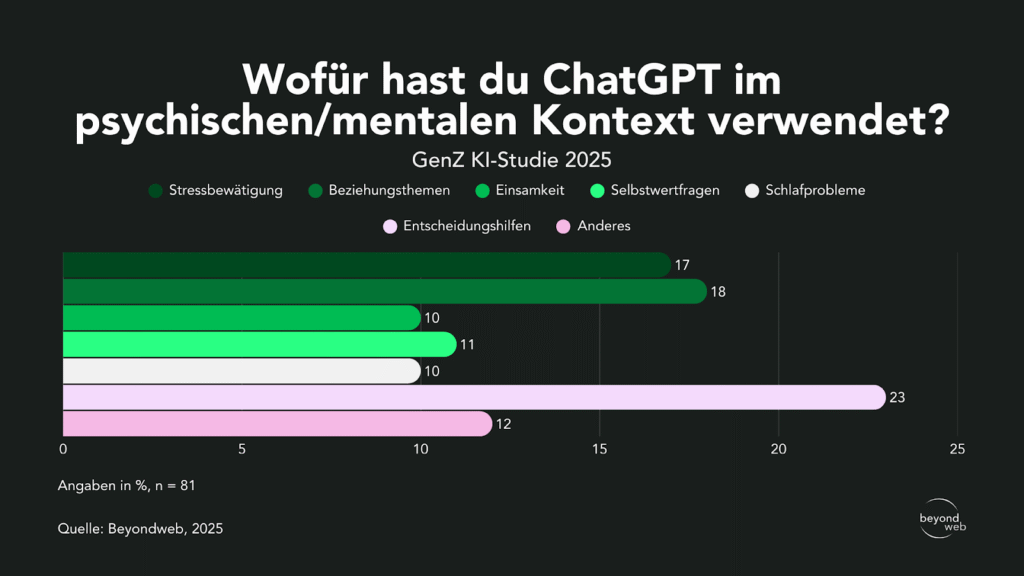

In a one-on-one consulting setting, respondents use ChatGPT for various purposes.

The most frequently cited reason was decision-making support (23%), followed by relationship issues (18%) and stress management (17%).

11% cited self-esteem issues, while sleep problems and loneliness were each cited by 10%.

Under “Other,” 12% cited additional topics.

Responses from all respondents to the question “How have you used ChatGPT in a mental health context?”

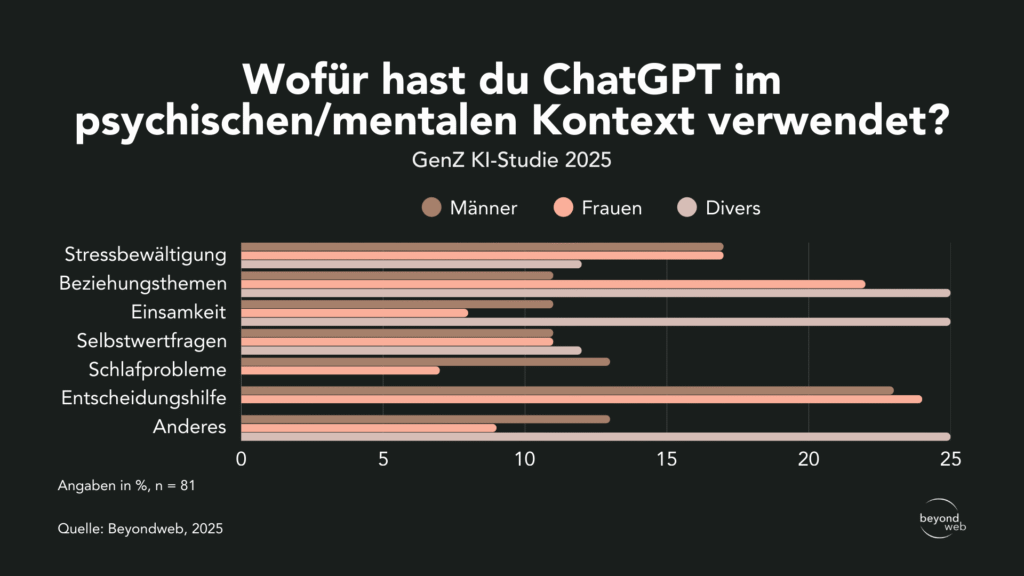

The picture varies by gender:

- Stress management: 17% of men, 17% of women, and 12% of people who identify as non-binary.

- Relationship issues: 11% of men, 22% of women, and 25% of people who identify as non-binary.

- Loneliness: 11% of men, 8% of women, and 25% of people who identify as non-binary.

- Self-confidence: 11% of men, 11% of women, and 12% of people who identify as non-binary.

- Sleep problems: 13% of men, 7% of women, and 0% of people who identify as non-binary.

- Decision-making support: 23% of men, 24% of women, and 0% of people who identify as non-binary.

- Other: 13% of men, 9% of women, and 25% of people who identify as non-binary.

Responses broken down by gender to the question “How have you used ChatGPT in a psychological/mental health context?”

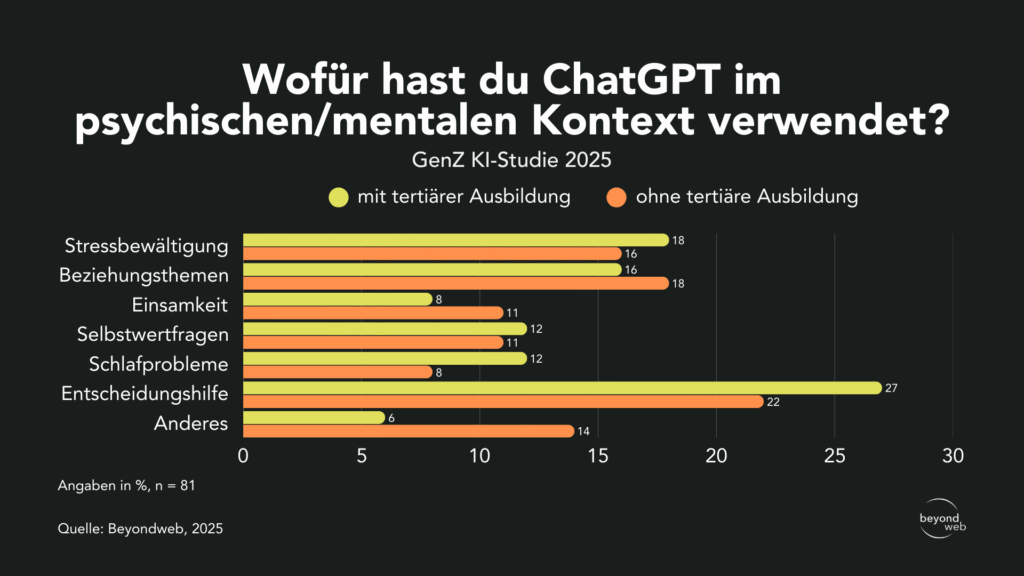

Differences are also evident when it comes to educational attainment:

- Stress management: 18% of respondents with a college degree and 16% without a college degree.

- Relationship issues: 16% of college graduates and 18% of those without a college degree.

- Loneliness: 8% of people with a college degree and 11% of those without a college degree.

- Self-confidence: 12% among those with a college education and 11% among those without a college education.

- Sleep problems: 12% of college graduates and 8% of those without a college degree.

- Decision-making support: 27% with a college degree and 22% without.

- Other: 6% of college graduates and 14% of non-college graduates.

Responses broken down by educational level to the question “How have you used ChatGPT in a psychological/mental health context?”

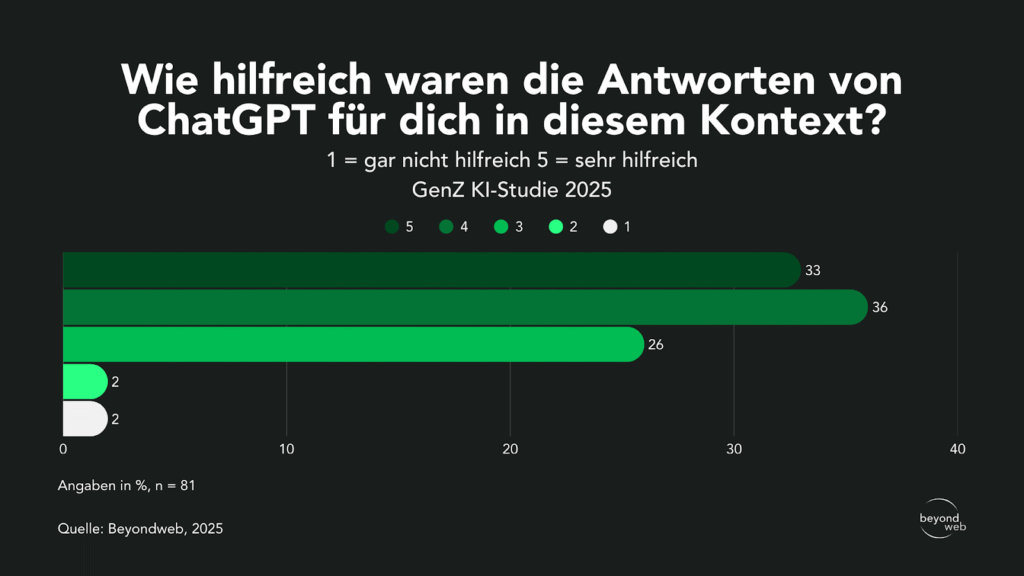

Assessment of the usefulness of ChatGPT responses

According to our study results, 36% of respondents rated ChatGPT’s responses as 4 = helpful, while 33% rated them as 5 = very helpful.

26% gave a rating of 3 = somewhat helpful. Only 2% rated the answers as 1 = not helpful at all, and another 2% as 2 = not very helpful.

Responses from all respondents to the question “How helpful were ChatGPT’s answers to you in this context? (1 = not at all helpful, 5 = very helpful)”

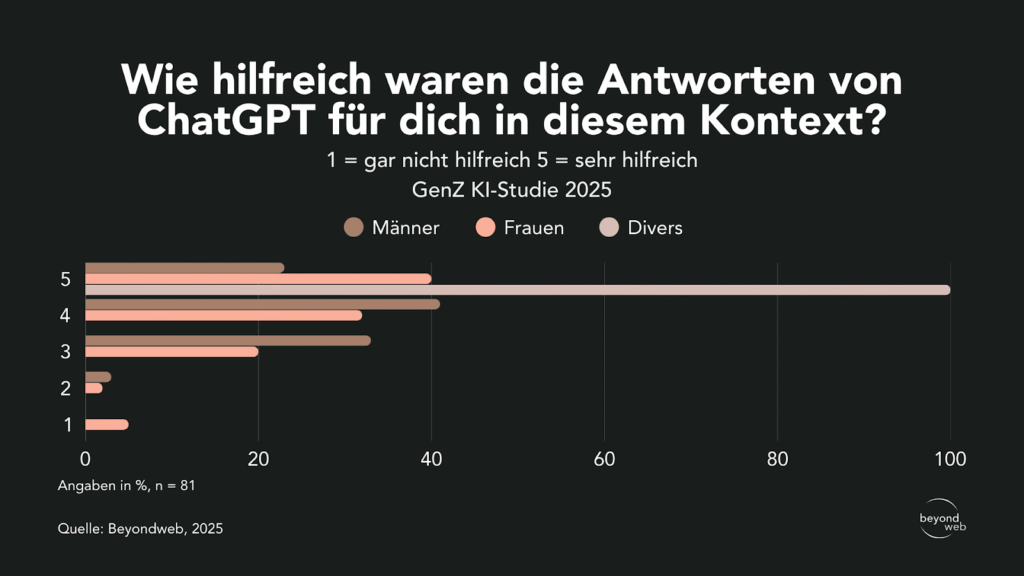

When broken down by gender, 41% of men rated the answers as a 4, compared to 32% of women.

The highest rating of 5 was given by 23% of men and 40% of women.

Among those who identified as non-binary, 100% gave the highest rating of 5.

An average rating of 3 was given by 33% of men and 20% of women.

2% of men and 2% of women gave a rating of 2.

5% of women gave the lowest rating (1), while no men or people who identified as non-binary selected this option.

Responses broken down by gender to the question “How helpful were ChatGPT’s answers to you in this context? (1 = not at all helpful; 5 = very helpful)”

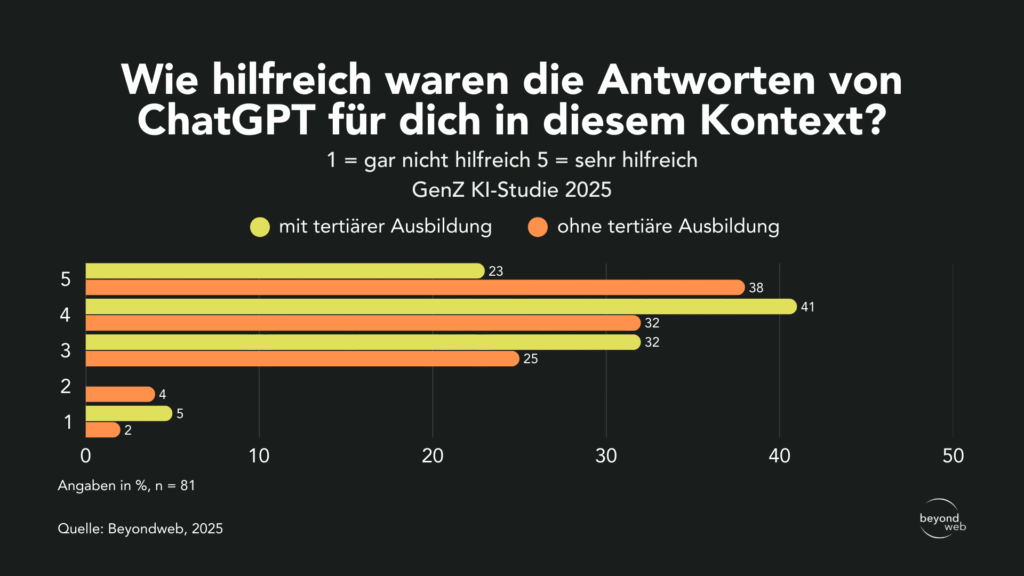

Broken down by educational level, 41% of respondents with a tertiary education rated ChatGPT’s answers a 4, compared with 32% of those without a tertiary education.

The highest rating of 5 was given by 38% of those without a college degree and 23% of respondents with a college degree.

An average rating of 3 was given by 32% of college graduates and 25% of non-college graduates.

Two people (4%) without a college degree gave a rating of 2, while no one with a college degree did.

The lowest rating (1) was given by 5% of college graduates and 2% of those without a college degree.

Responses broken down by educational level to the question “How helpful were ChatGPT’s answers to you in this context? (1 = not at all helpful; 5 = very helpful)”

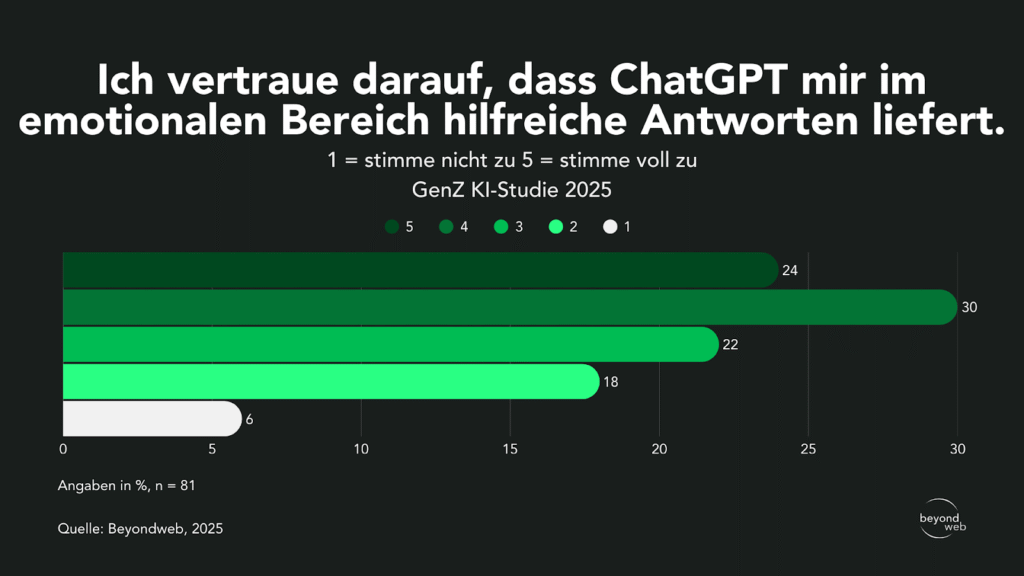

Confidence in ChatGPT's assistance

According to our study results, 30% of respondents believe that ChatGPT provides helpful answers in emotional matters (4), and 24% even strongly agree with this (5).

22% expressed moderate agreement (3).

Eighteen percent expressed less confidence with a rating of 2, while 6% said they strongly disagreed (1).

Responses from all respondents to the question “I trust that ChatGPT will provide me with helpful answers regarding emotional issues. (1 = strongly disagree; 5 = strongly agree)”

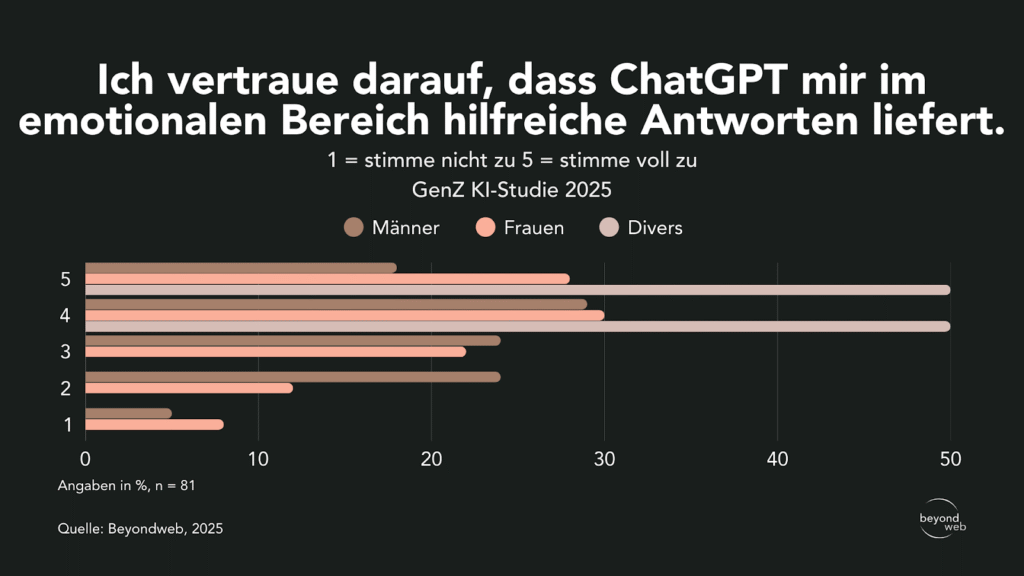

When broken down by gender, 29% of men, 30% of women, and 50% of people who identify as non-binary answered "4."

The highest rating of 5 was given by 18% of men, 28% of women, and 50% of people who identify as non-binary.

24% of men and 22% of women gave a rating of 3.

24% of men and 12% of women gave a rating of 2.

The lowest rating (1) was given by 5% of men and 8% of women.

Responses broken down by gender to the question “I trust that ChatGPT will provide me with helpful answers regarding emotional issues. (1 = strongly disagree; 5 = strongly agree)”

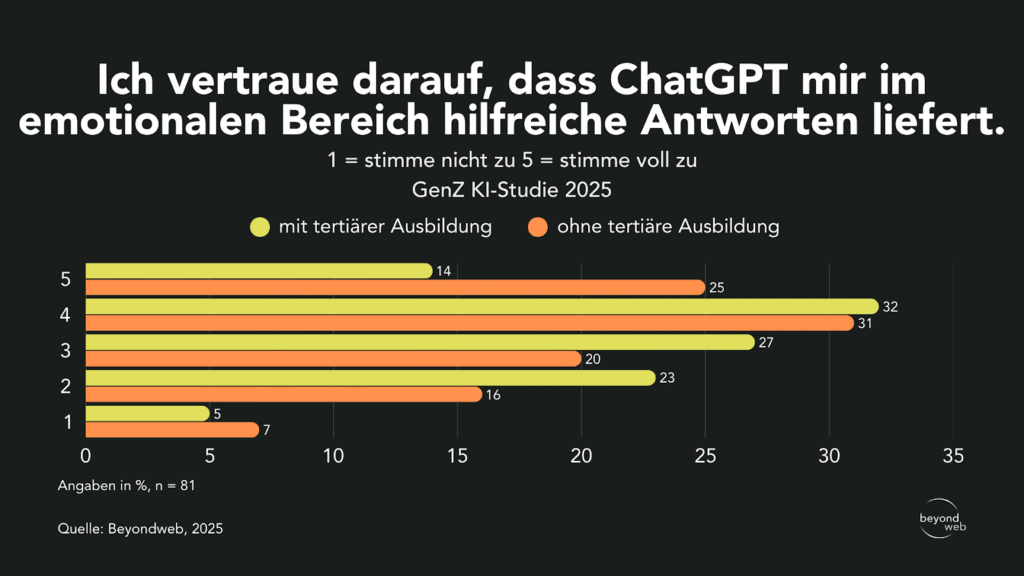

When broken down by educational background, the following differences emerge: 32% of respondents with a tertiary education and 31% without a higher education degree gave a rating of 4.

14% of those with a college degree and 25% of those without strongly agreed (5).

An average rating of 3 was given by 27% of those with a college education and 20% of those without.

23% of college graduates and 16% of non-college graduates gave a rating of 2.

The lowest rating (1) was given by 5% of those with a college degree and 7% of those without.

Responses broken down by educational level to the question “I trust that ChatGPT will provide me with helpful answers regarding emotional issues. (1 = strongly disagree; 5 = strongly agree)”

Perceived anonymity when communicating with ChatGPT

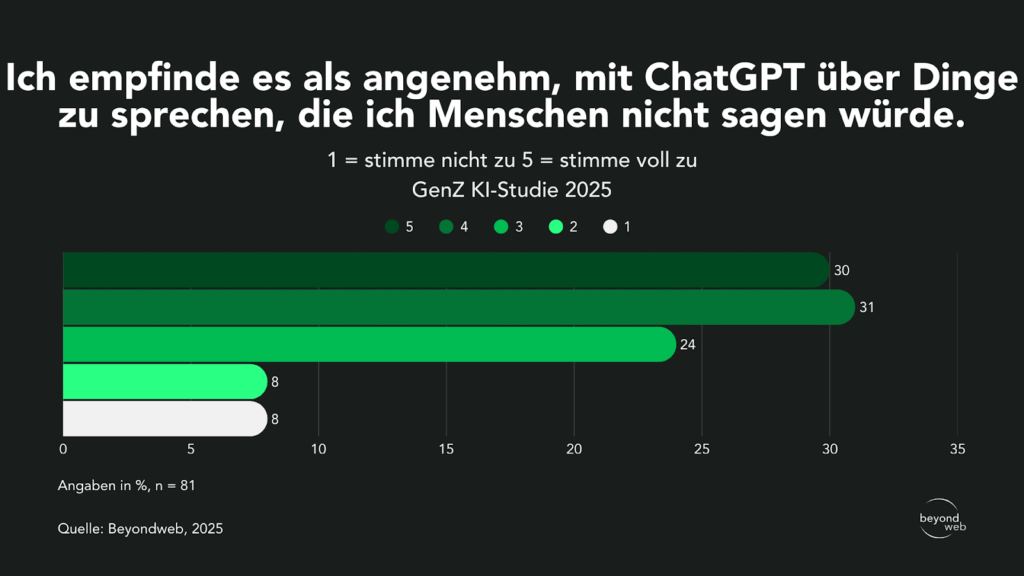

31% of respondents find it comfortable (rating of 4) to talk to ChatGPT about things they wouldn’t tell other people.

30% even strongly agree with this statement (5).

24% expressed moderate agreement (3).

Only 8% said they strongly disagreed with the statement (1), and another 8% said they somewhat disagreed (2).

Responses from all respondents to the question “I find it comfortable to talk to ChatGPT about things I wouldn’t tell other people. (1 = strongly disagree; 5 = strongly agree)”

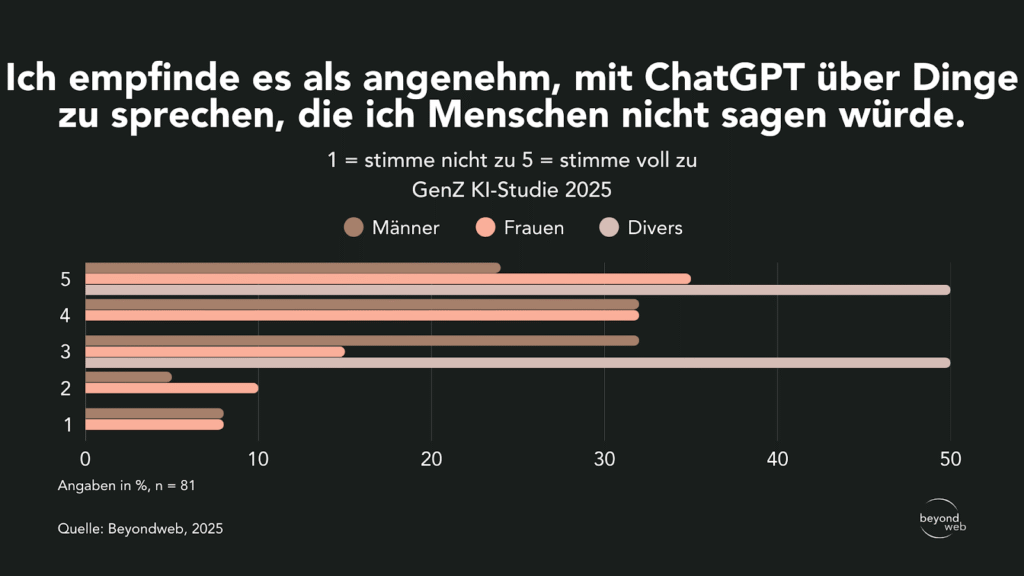

When broken down by gender, 32% of men and 32% of women rated the statement a 4.

24% of men, 35% of women, and 50% of people who identify as non-binary strongly agreed.

An average rating of 3 was given by 32% of men, 15% of women, and 50% of people who identify as non-binary.

2% of men and 10% of women gave a rating of 2.

The lowest rating (1) was given by 8% of men and 8% of women; no person identifying as non-binary selected this option.

Responses broken down by gender to the question “I find it comfortable to talk to ChatGPT about things I wouldn’t tell other people. (1 = strongly disagree; 5 = strongly agree)”

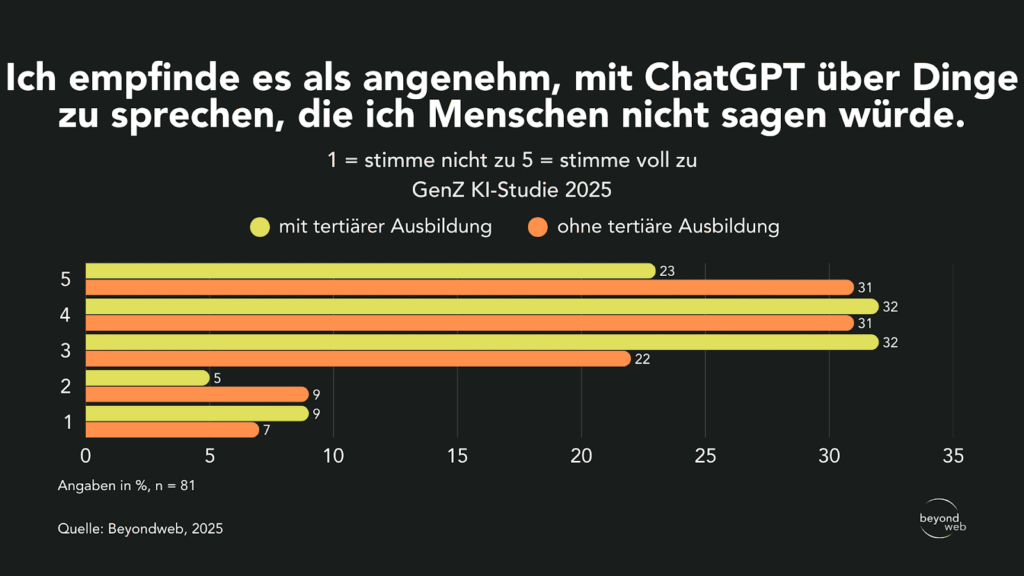

When broken down by educational background, it appears that 32% of respondents with a tertiary degree and 22% without a higher education degree rated the statement a 3.

A rating of 4 was given by 32% of college graduates and 31% of non-college graduates.

The highest rating of 5 was given by 23% of those with a tertiary education and 31% of those without a tertiary degree.

A rating of 2 was given by 5% of college graduates and 9% of non-college graduates.

The lowest rating (1) was given by 9% of people with a college degree and 7% of those without.

Responses broken down by educational level to the question “I find it comfortable to talk to ChatGPT about things I wouldn’t tell other people. (1 = strongly disagree; 5 = strongly agree)”

Perceived neutrality of ChatGPT compared to humans

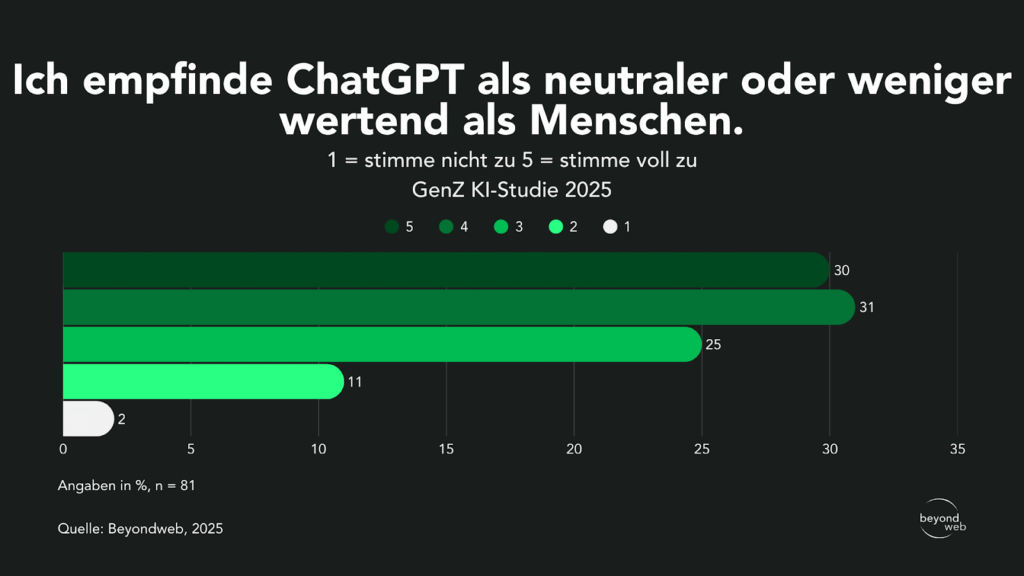

31% of respondents perceive ChatGPT as more neutral or less judgmental and rated it a 4.

30% strongly agreed with this statement (5).

25% expressed moderate agreement (3).

Eleven percent expressed a lower level of agreement, giving a score of 2, while only 2% of respondents said they strongly disagreed (1).

Responses from all respondents to the question “I perceive ChatGPT as more neutral or less judgmental than humans. (1 = strongly disagree; 5 = strongly agree)”

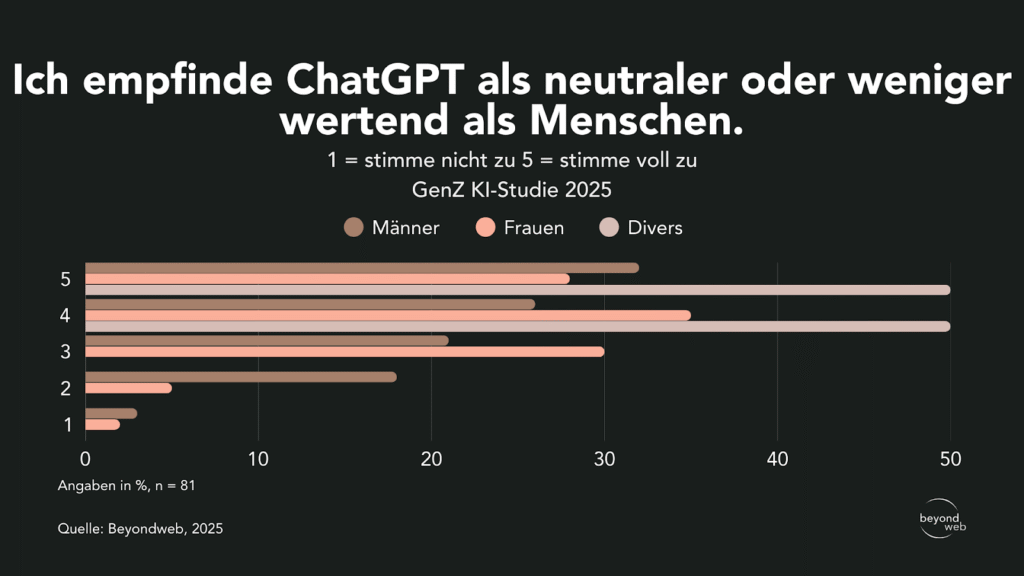

When broken down by gender, 26% of men, 35% of women, and 50% of people who identify as non-binary rated the statement a 4.

The highest rating of 5 was given by 32% of men, 28% of women, and 50% of people who identify as non-binary.

The rating of 3 was given by 21% of men and 30% of women.

18% of men and 5% of women gave a rating of 2.

The lowest rating (1) was given by 3% of men and 2% of women; no person identifying as non-binary selected this option.

Responses broken down by gender to the question “I perceive ChatGPT as more neutral or less judgmental than humans. (1 = strongly disagree; 5 = strongly agree)”

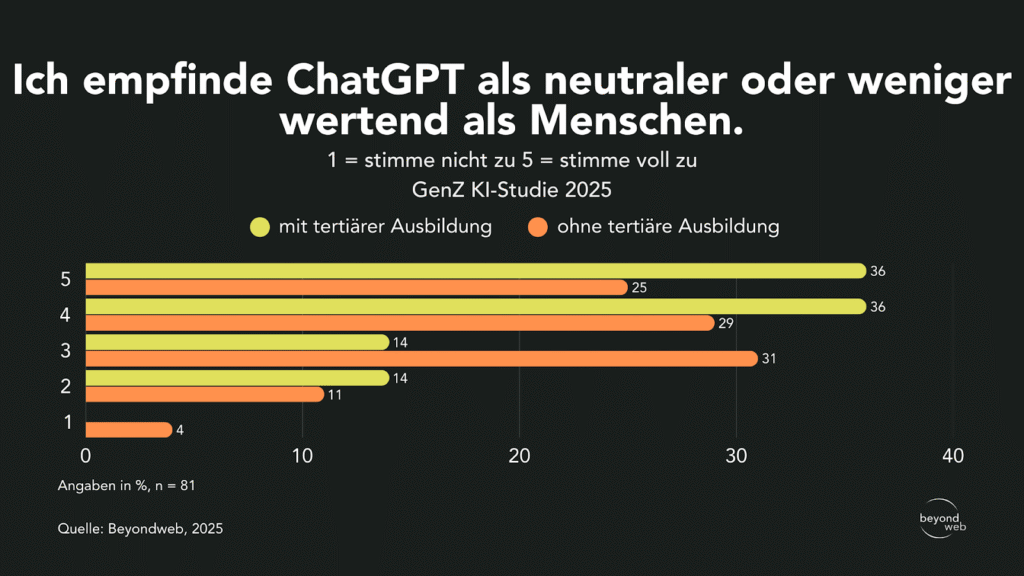

When broken down by educational background, 36% of respondents with a tertiary degree and 29% without a higher education degree rated the statement a 4.

36% of college graduates and 25% of non-college graduates strongly agreed (5).

The rating of 3 was given by 14% of respondents with a college degree and 31% of those without one.

A rating of 2 was given by 14% of college graduates and 11% of non-college graduates.

The lowest rating (1) was given by 4% of respondents without a college degree; none of the respondents with a college degree chose it.

Responses broken down by educational level to the question “I perceive ChatGPT as more neutral or less judgmental than humans. (1 = strongly disagree; 5 = strongly agree)”

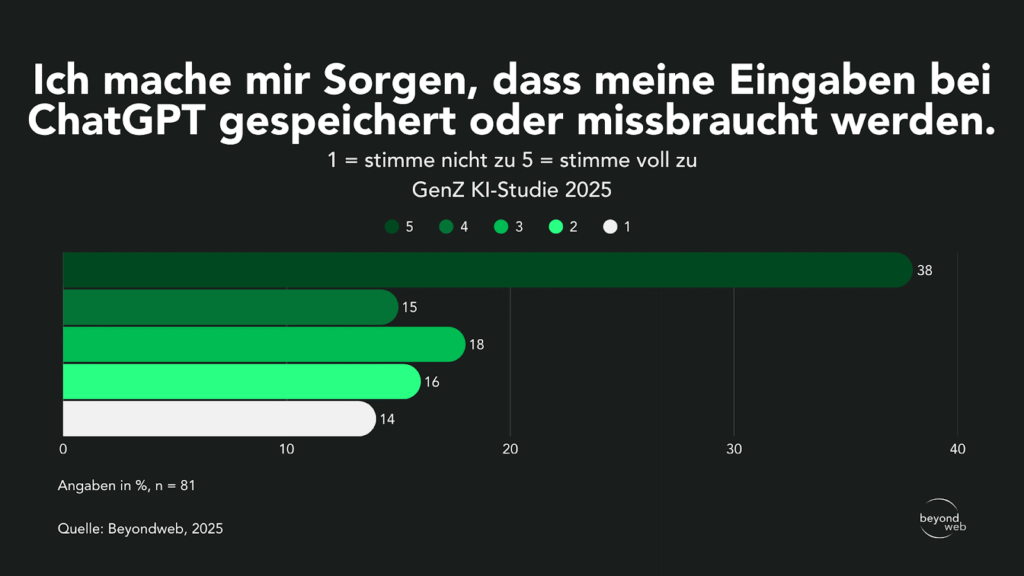

Assessment of privacy concerns regarding the use of ChatGPT

According to our survey results, 38% of respondents are very concerned that their input might be stored or misused by ChatGPT (5 = strongly agree).

15% gave a rating of 4 and are quite concerned.

18% expressed moderate concern (3), 16% indicated low concern (2), and only 14% of respondents expressed no concern at all (1).

Responses from all respondents to the question “I am concerned that my input might be stored or misused by ChatGPT. (1 = strongly disagree; 5 = strongly agree)”

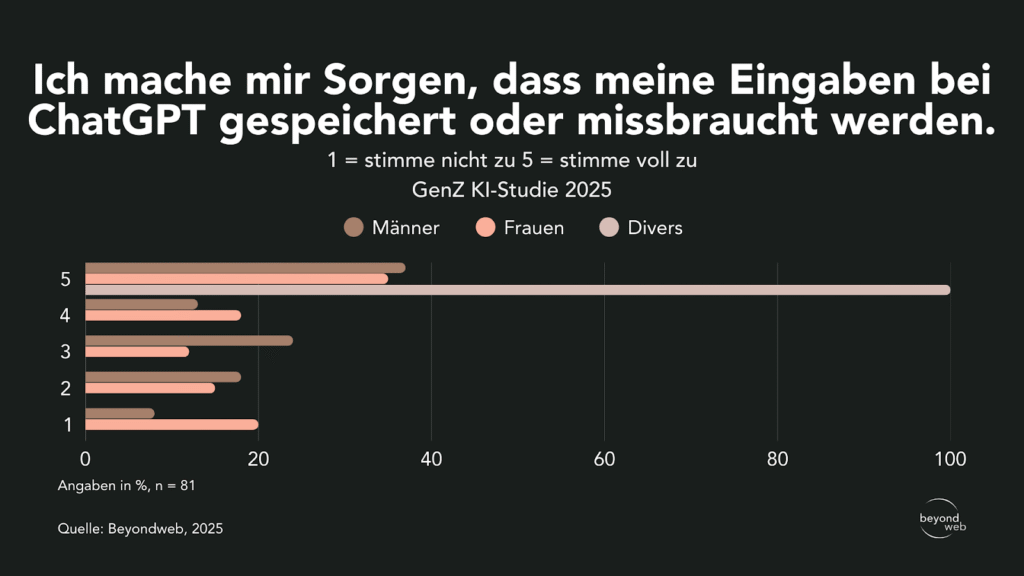

When broken down by gender, 37% of men and 35% of women gave a rating of 5.

Among people who identify as non-binary, this proportion is 100%.

13% of men and 18% of women gave a rating of 4.

A rating of 3 was chosen by 24% of men and 12% of women.

18% of men and 15% of women expressed low agreement (2).

Eight percent of men and 20% of women expressed no concern at all (1).

Responses broken down by gender to the question “I am concerned that my input might be stored or misused by ChatGPT. (1 = strongly disagree; 5 = strongly agree)”

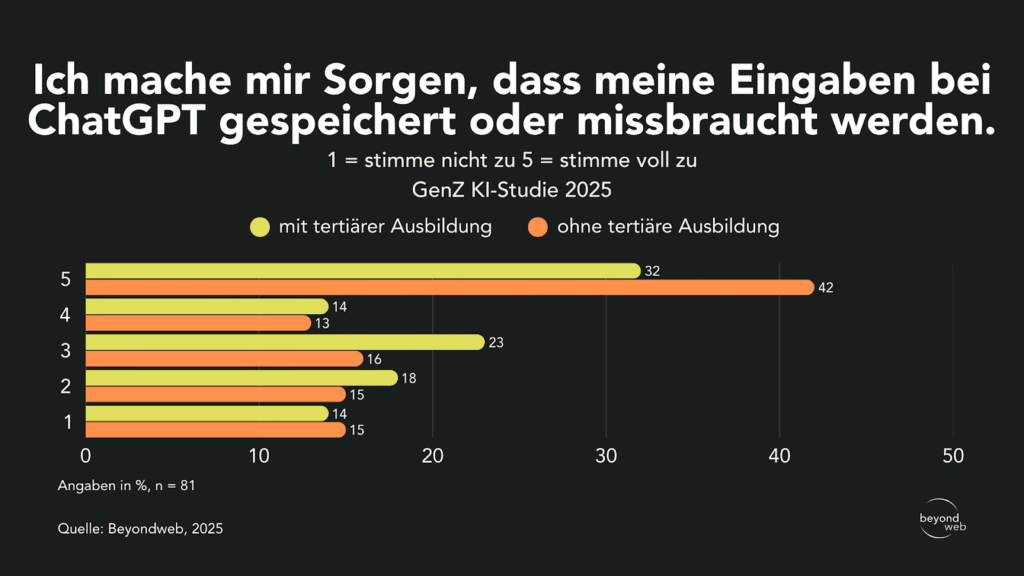

Broken down by educational level, differences emerge: 42% of respondents without a tertiary degree and 32% of those with one said they were very concerned (5).

A rating of 4 was given by 14% of college graduates and 13% of non-college graduates.

A rating of 3 was chosen by 23% of those with a college degree and 16% of those without.

18% of college graduates and 15% of non-college graduates expressed a low level of agreement (2).

14% of respondents with a college degree and 15% without one have no concerns (1).

Responses broken down by educational level to the question “I am concerned that my input might be stored or misused by ChatGPT. (1 = strongly disagree; 5 = strongly agree)”

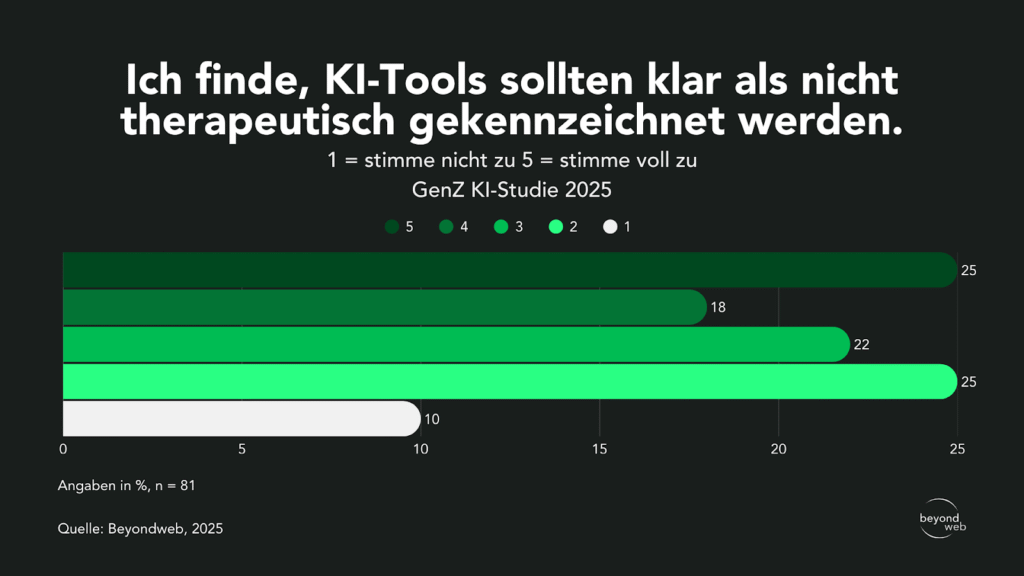

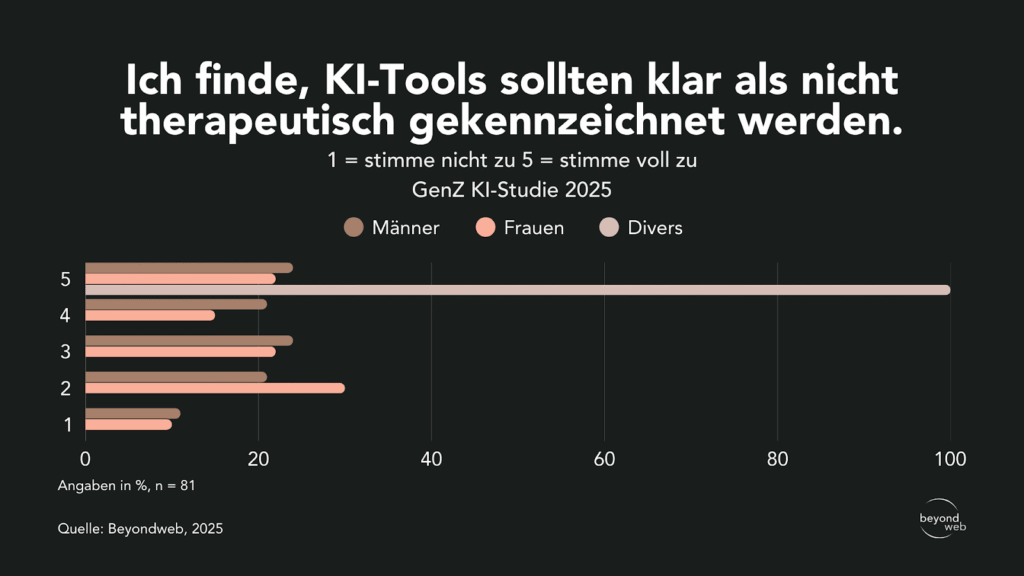

Assessment of the labeling of AI tools as non-therapeutic

According to our study results, 25% of respondents strongly agree (5) that AI tools should be clearly labeled as non-therapeutic.

18% somewhat agreed (4) and 22% expressed moderate agreement (3).

25% said they tended to disagree (2).

Only 10% completely disagreed with this statement (1).

Responses from all respondents to the question “I think AI tools should be clearly labeled as non-therapeutic. (1 = strongly disagree; 5 = strongly agree)”

When broken down by gender, 24% of men and 22% of women strongly agree with the statement (5).

Among people who identify as non-binary, this proportion is 100%.

21% of men and 15% of women somewhat agreed (4).

Option 3 was chosen by 24% of men and 21% of women.

21% of men and 30% of women tended to disagree (2).

11% of men and 10% of women completely rejected the statement (1).

Responses broken down by gender to the question “I think AI tools should be clearly labeled as non-therapeutic. (1 = strongly disagree; 5 = strongly agree)”

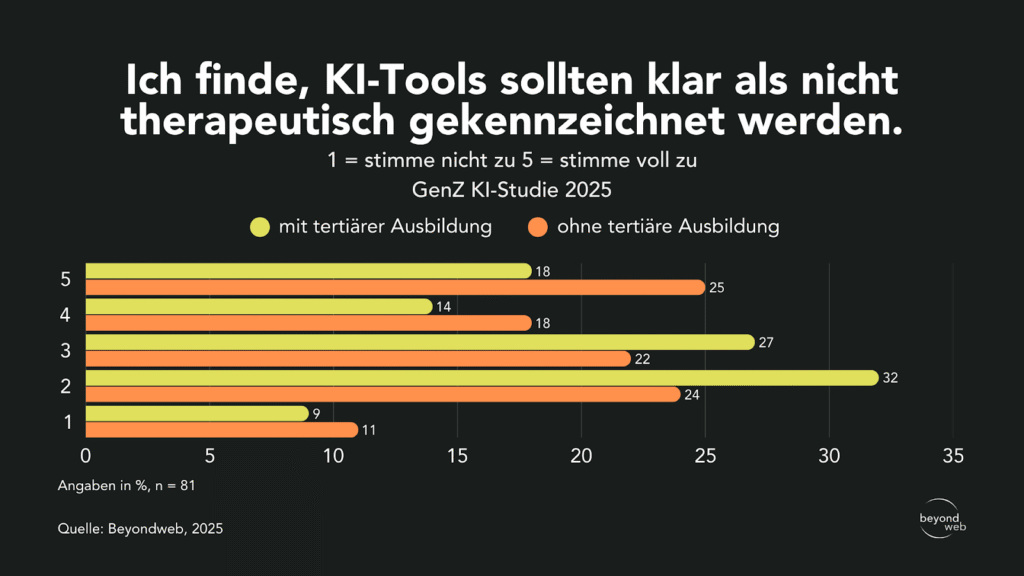

Broken down by educational level, 25% of respondents without a tertiary degree and 18% of those with one strongly agree with the statement (5).

18% of those without a college degree and 14% of those with one somewhat agreed (4).

Option 3 was chosen by 27% of those with a college degree and 22% of those without a college degree.

32% of college graduates and 24% of non-college graduates tended to disagree (2).

9% of college graduates and 11% of respondents without a college degree completely rejected the statement (1).

Responses broken down by educational level to the question “I think AI tools should be clearly labeled as non-therapeutic. (1 = strongly disagree; 5 = strongly agree)”

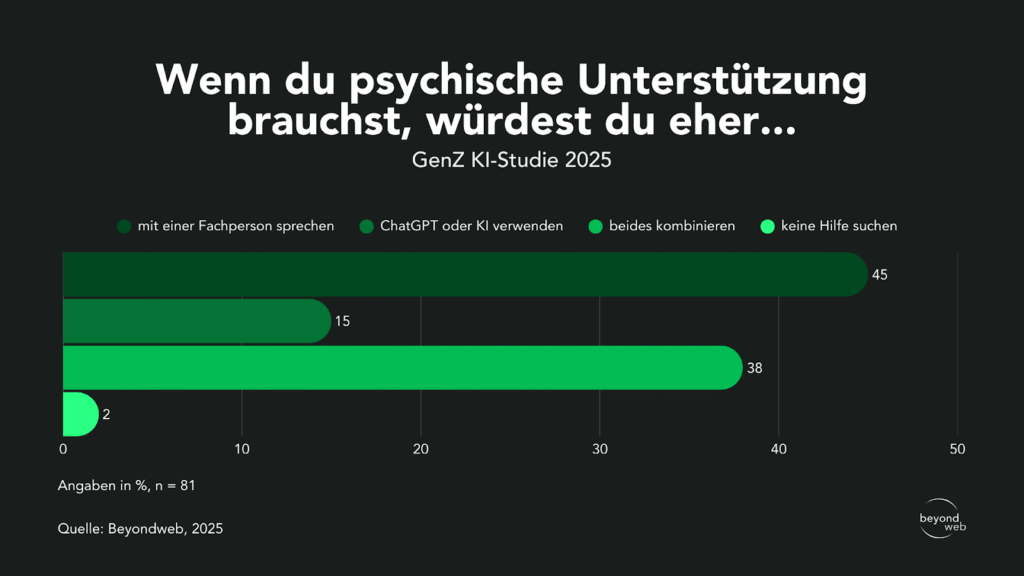

Preferences when choosing mental health support

According to the results of our study, 45% of respondents would be most likely to turn to a professional for mental health support.

38% said they wanted to combine both AI tools and a specialist.

15% would use only ChatGPT or another AI tool, while 2% said they did not want to use any assistance.

Responses from all respondents to the question “If you needed mental health support, would you be more likely to: talk to a professional / use ChatGPT or AI / combine both / not seek help?”

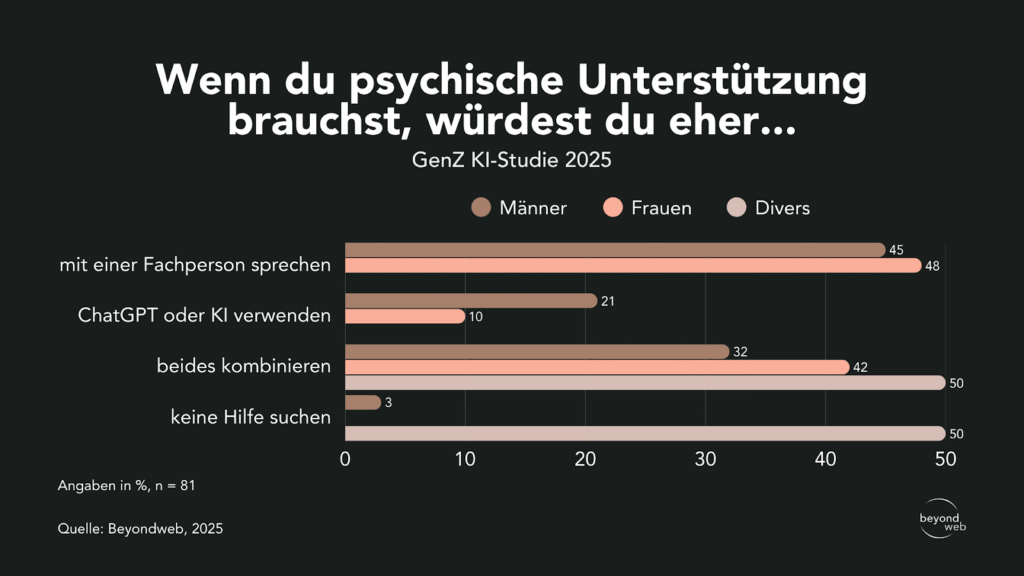

When broken down by gender, 45% of men and 48% of women say they would be most likely to consult a professional.

32% of men, 42% of women, and 50% of people who identify as non-binary would prefer a combination of a professional and AI.

21% of men and 10% of women would use only AI tools.

3% of men, 0% of women, and 50% of people who identify as non-binary said they would not seek help.

Responses broken down by gender to the question “If you needed mental health support, would you be more likely to: talk to a professional / use ChatGPT or AI / combine both / not seek help?”

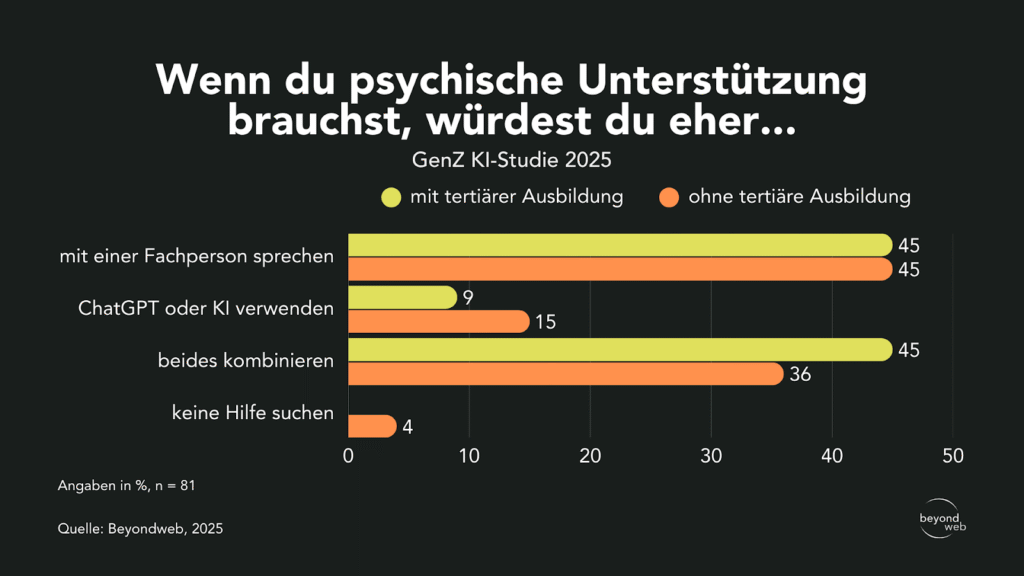

When broken down by educational background, the results show that 45% of respondents with a tertiary degree and 45% of those without a higher education degree are most likely to choose a specialist.

A combination of a professional and AI is preferred by 36% of those without a college degree and by 45% of those with a college degree.

15% of people without a college degree and 9% of college graduates would use only AI tools.

4% of respondents without a college degree said they would not seek help, while none of the college graduates chose this option.

Responses broken down by educational level to the question “If you needed mental health support, would you be more likely to: talk to a professional / use ChatGPT or AI / combine both / not seek help?”

Reasons for or against seeing a mental health professional when facing mental health issues

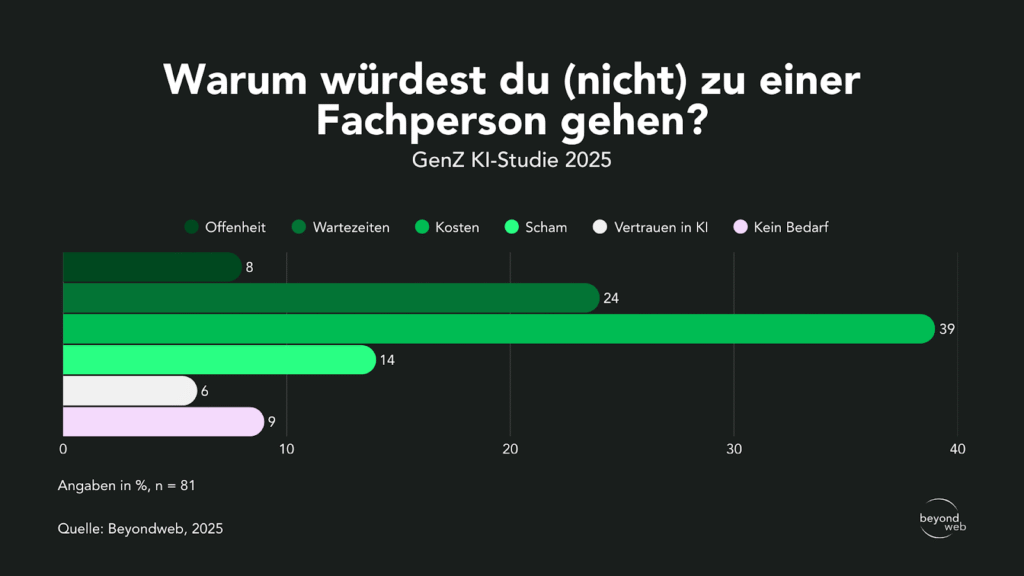

According to our study results, 39% of respondents say that cost is the main reason they do not seek professional help.

24% cite waiting times as a barrier, and 14% cite feelings of embarrassment.

9% see no need, and 6% trust AI tools, which is why they would be less likely to see a professional.

Only 8% cited openness as the reason.

Responses from all respondents to the question “Why would you (not) see a professional?”

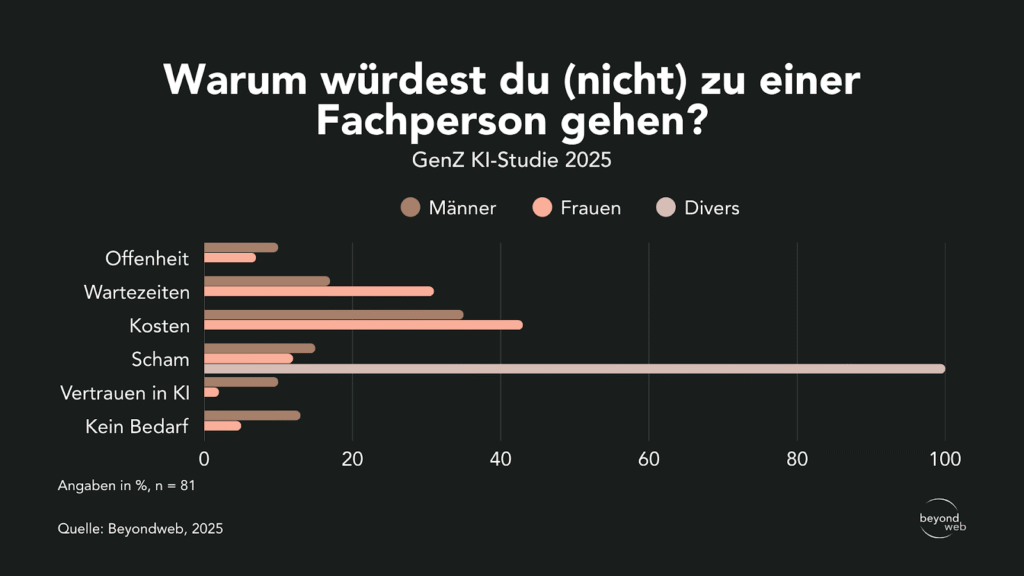

When broken down by gender, 35% of men and 43% of women cited cost as the main obstacle.

31% of women and 17% of men cited waiting times.

15% of men and 12% of women cited embarrassment as the reason.

13% of men and 5% of women said they saw no need for it.

10% of men and 2% of women rely on AI tools instead.

10% of men and 7% of women cited openness.

Among those who identified as non-binary, 100% cited embarrassment as the reason.

Responses broken down by gender to the question “Why would you (not) see a professional?”

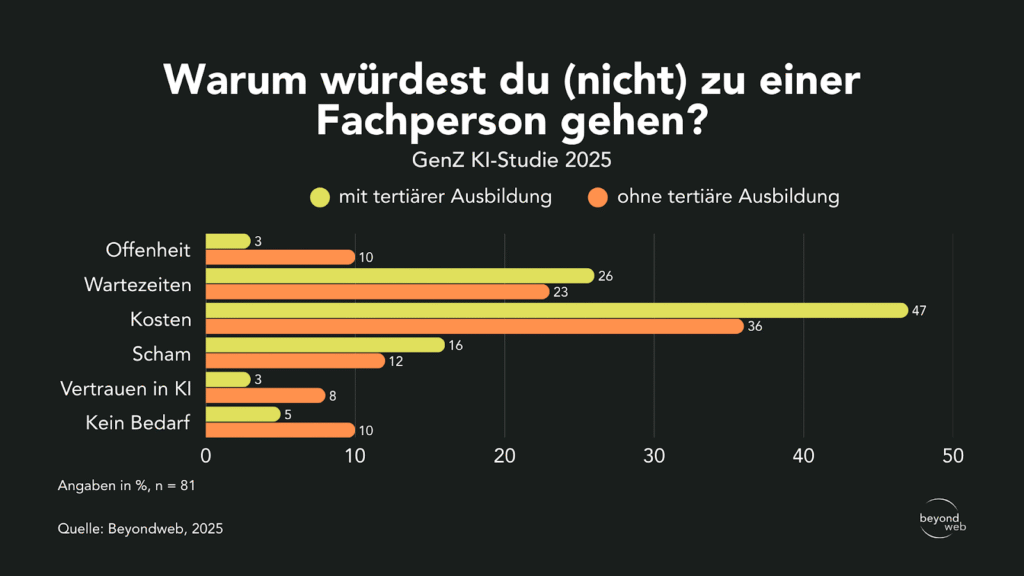

When broken down by educational background, 47% of respondents with a college degree and 36% without a college degree cited cost as the main reason.

26% of college graduates and 23% of non-college graduates cited waiting times.

16% of respondents with a college education and 12% without one cited embarrassment.

5% of college graduates and 10% of non-college graduates said they saw no need.

8% of non-college graduates and 3% of college graduates cited trust in AI.

3% of college graduates and 10% of non-college graduates cited openness.

Responses broken down by educational level to the question “Why would you (not) see a professional?”

Interpretation of the results

The results of this qualitative survey shed new light on the role that artificial intelligence (AI) plays in the psychological and mental well-being of Generation Z in Switzerland.

In this context, the use of AI tools such as ChatGPT is not merely a marginal phenomenon, but is already widespread.

The following section examines these key findings in greater detail and relates them to social trends and the insights of an expert.

AI as an alternative source of emotional support

Nearly half (49%) of those surveyed have already used AI tools to talk about personal or emotional issues.

This finding demonstrates that Gen Z in Switzerland is highly open to interacting with non-human counterparts in sensitive areas.

It is particularly striking that the most frequently mentioned areas of application are decision-making (23%) and relationship issues (18%), that is, areas that require both cognitive-rational deliberation and emotional reflection.

This illustrates that AI is being used not only as a source of information, but increasingly as an accessible companion for everyday questions and emotional stress.

This trend is consistent with international findings.

A large-scale survey of teenagers in the USA shows that around 70% have already used so-called “AI companions”; about a third even use them regularly (AP News, 2023).

According to the young people themselves, the main reasons for using chatbots are their round-the-clock availability and the fact that they do not judge or stigmatize users.

This creates a space where feelings can be expressed without fear of social repercussions.

A YouGov survey shows similar results, with over 55% of 18- to 29-year-olds reporting that they feel comfortable discussing mental health concerns with AI chatbots (Axios, 2025).

Although the empathy these chatbots express is computer-generated, young users apparently perceive it as genuine, which leads to greater trust in the interaction.

In addition to these quantitative findings, a qualitative study provides further evidence of the benefits of AI in emotional contexts.

In interviews with 19 people, the respondents described their conversations with generative AI chatbots as helpful when dealing with issues such as relationship problems or even trauma recovery.

Several participants reported experiencing the interaction as an “emotional sanctuary”, a kind of protected retreat in which they could open up without being judged (Nature, 2024).

These descriptions suggest that AI systems can fulfill not only rational and informational functions but also psychosocial functions that were previously the exclusive domain of human interactions.

In addition to these studies, which primarily highlight preferences regarding the use of AI in an emotional context, there is also evidence that chatbot-based interventions can be effective.

A systematic meta-analysis of chatbot-based interventions for adolescents and young adults concluded that these programs have a significant effect on reducing psychological distress (Hedges’ g ≈ –0.28).

This shows that chatbots not only offer short-term relief but can also measurably contribute to improving well-being (PMC, 2024).
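For readers unfamiliar with the effect size cited above, Hedges' g is a standardized mean difference with a small-sample correction. The sketch below shows the standard computation; the input values are purely hypothetical and are not taken from the cited meta-analysis, and the function name `hedges_g` is illustrative.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference (treatment minus control) with small-sample correction."""
    # Pooled standard deviation of the two groups.
    pooled_sd = math.sqrt(
        ((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / (n_t + n_c - 2)
    )
    cohens_d = (mean_t - mean_c) / pooled_sd
    # Approximate correction factor for small-sample bias.
    correction = 1 - 3 / (4 * (n_t + n_c) - 9)
    return correction * cohens_d


# Hypothetical distress scores (lower is better), so a negative g favors the intervention.
g = hedges_g(mean_t=10.0, sd_t=4.0, n_t=50, mean_c=12.0, sd_c=4.0, n_c=50)
print(f"Hedges' g = {g:.2f}")
```

A negative value, as in the cited g ≈ –0.28, indicates lower distress in the intervention group. By common convention, absolute values around 0.2 are read as small effects and values around 0.5 as medium effects.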

What is particularly interesting is that the effects are most pronounced in cases of mild to moderate psychological distress, suggesting that AI tools have great potential, especially when used for prevention and support.

At the same time, accountability remains a key issue: while it is clearly defined in psychotherapy, AI applications in the healthcare context require clear governance and human oversight.

Gender gap in usage, but not in preference

The study's findings confirm a marked gender gap in the overall use of ChatGPT:

Men (52%) use the tool more frequently on a daily basis than women (26%), a trend that is also reflected in earlier studies on technology adoption.

It is noteworthy, however, that these gender-based differences disappear when it comes to specific preferences regarding psychological support.

When asked whether they would be most likely to consult a specialist, men (45%) and women (48%) are almost neck and neck.

The combination of an expert and AI is also mentioned with similar frequency by both genders (32% of men, 42% of women).

This suggests that, despite generally using AI less frequently, women are certainly willing to use AI in an emotional context when it comes to matters that affect their own lives.

This discrepancy between general usage and situational openness warrants further study.

Dr. phil. Hannah Süss, Lead Psychologist, Board Member of the Zurich Association of Psychologists (ZüPP), and Co-President of the Steering Committee of the Swiss Professional Council for Psychotherapy (FSP), comments on these findings:

“In principle, it’s understandable that people want to express their thoughts and feelings in a safe environment. AI systems can provide an accessible space for this.”

At the same time, she warns:

“However, ChatGPT is not a psychotherapist, but rather a language model without consciousness, clinical responsibility, or the ability to form personal relationships. While you can share personal problems with it, you should always be aware that AI cannot provide a sound psychological diagnosis or treatment.”

Süss nevertheless highlights potential opportunities:

AI can be helpful in everyday areas such as psychoeducation, stress management, or motivation; she views such applications as “supplementary tools in psychotherapy, provided they are used in a controlled and responsible manner,” not as a substitute for professional help.

At the same time, she identifies clear risks:

“A key risk lies in pseudo-authenticity. ChatGPT conveys empathy and understanding through language, but it lacks its own experiences or clinical judgment.”

This could lead to misinformation, inappropriate advice, and a lack of crisis intervention; furthermore, the absence of the legal and ethical responsibilities that psychotherapists bear is highly problematic, “especially in acute mental health crises.”

This assessment of opportunities and risks is clearly supported by current scientific and journalistic sources:

A study published in *Psychiatric Services* shows that chatbots respond inconsistently to indirect but potentially dangerous inquiries about suicide and are unable to provide reliable crisis intervention.

As the report puts it: “AI has no duty to intervene in crises” (AP News, 2025).

A report about a teenager who took his own life following problematic interactions with a chatbot, and whose family is now suing, also underscores the risk:

AI safety mechanisms can fail during prolonged interactions.

Furthermore, another case report serves as a stark warning about “AI psychosis”: A man entered a manic phase because ChatGPT reinforced his delusional beliefs rather than countering them.

Even in the face of obvious mental instability, the AI responded with supportive reinforcement rather than interruption, which exacerbated his mental crisis.

These cases show that the easily accessible space offered by AI can be a double-edged sword:

On the one hand, it facilitates access to a non-judgmental space of emotional openness; on the other hand, it carries significant risks if there is a lack of genuine therapeutic training or crisis intervention skills.

Financial barriers and long wait times are driving the adoption of AI

The main reasons preventing Gen Z individuals from seeing a healthcare professional are cost (39%) and wait times (24%).

In practice, these factors are very real and serious:

According to the Federation of Swiss Psychologists (FSP), only 16% of psychologists can schedule appointments within two weeks; for 32%, waiting times are at least four weeks; and 30% are currently not accepting new clients (Krucker, 2025).

At the same time, healthcare costs in Switzerland continue to rise:

According to the KOF forecast, healthcare spending is expected to rise to over 100 billion Swiss francs by 2025 and increase as a percentage of gross domestic product (GDP) from 11.8% (2023) to 12.1% (2025) (KOF, 2024).

This trend means that psychological treatment is becoming increasingly expensive, particularly due to high premiums, deductibles, and copayments.

In this context, the use of free, anonymous, and instantly available AI tools such as ChatGPT (especially in emotionally stressful situations) seems understandably appealing.

Dr. Hannah Süss describes this low-threshold accessibility as a “double-edged sword”:

On the one hand, it enables people who might otherwise not seek help to take that first important step.

On the other hand, there is a risk that people could delay seeking professional help because they might mistakenly feel that they are already receiving enough support.

In addition, there are gender-specific differences:

Women are more likely to cite cost (43%) and wait times (31%) as reasons for not seeing a healthcare professional, while men cite these barriers less frequently (35% and 17%, respectively).

This suggests that women are more aware of or affected by cost and access barriers and may therefore be more willing to consider low-threshold options, such as AI tools, as alternatives.

Overall, these findings suggest that structural gaps in access to psychological care are a driving factor behind the increasing use of AI in the emotional domain.

At the same time, they emphasize that while digital tools like ChatGPT significantly lower certain barriers, they must also be carefully integrated into the healthcare system to avoid delays in professional support.

High levels of trust and value, despite concerns

Respondents generally rate ChatGPT’s responses as very helpful (33%) or helpful (36%) in an emotional context, while trust in the AI is also high.

A total of 54% agree or strongly agree that they trust ChatGPT to provide helpful answers on emotional issues.

One possible reason for this positive impression lies in the tool's perceived anonymity and neutrality:

61% of Gen Z respondents find it comfortable to talk to ChatGPT about things they wouldn’t tell other people, and the same share view it as more neutral or less judgmental than humans.
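The combined agreement figures in this section are sums of the two highest ratings (4 and 5). As a sketch of that arithmetic, using the overall distributions reported in the results section (the dictionary layout and the function name `top_two_box` are illustrative):

```python
# Overall rating distributions in percent, keyed by rating (1-5), as reported in the results.
trust = {1: 6, 2: 18, 3: 22, 4: 30, 5: 24}
anonymity = {1: 8, 2: 8, 3: 24, 4: 31, 5: 30}
neutrality = {1: 2, 2: 11, 3: 25, 4: 31, 5: 30}


def top_two_box(distribution: dict[int, int]) -> int:
    """Share of respondents choosing one of the two highest ratings."""
    return distribution[4] + distribution[5]


for name, dist in [("trust", trust), ("anonymity", anonymity), ("neutrality", neutrality)]:
    print(f"{name}: {top_two_box(dist)}%")
```

Because the underlying percentages are rounded, such sums can deviate by a point or so from values computed on the raw responses.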

These assessments are also reflected in scientific research:

A systematic review states that conversational AI is often perceived as non-judgmental.

This perception increases both users' trust and their willingness to open up and explore personal issues (Cha, 2023).

Further studies show that anonymity and confidentiality facilitated by AI help build trust, particularly among people who feel inhibited or stigmatized when communicating in person (Khawaja & Bélisle-Pipon, 2023).

A systematic review of user reviews of mental health apps confirms this finding:

In online feedback, the “role of AI as an alternative or complementary tool to therapy” ranks as an important topic, indicating that many view AI tools as a competent, complementary support to traditional therapeutic settings (Shan, 2022).

Despite this high level of trust, there are significant privacy and security concerns:

38% of respondents are very concerned that their data could be stored or misused.

This apparent discrepancy—that is, a high level of trust and a sense of benefit alongside reservations—suggests a pragmatic assessment:

Gen Z recognizes the immediate benefits of anonymous, instant support and considers them more important than potential risks.

This ambivalent attitude is characteristic of a generation that uses digital tools critically and thoughtfully:

It appreciates the benefits but remains aware of the limitations and risks.

AI as a supplement, not a replacement

Although 15% of respondents stated that they use AI tools exclusively for psychological support, the remaining results reveal a clear trend:

AI is primarily viewed as a supplement to traditional support, not as a replacement.

Nearly 40% of respondents would already use a combination of AI and a professional, while 45% would still be most likely to turn to a professional.

This underscores the fact that Gen Z is aware of the limitations of technology in a therapeutic context; they use AI where it makes sense, while at the same time recognizing the importance of professional support.

International sources support this approach:

An article in Teen Vogue describes how teenagers use AI tools such as ChatGPT, Woebot, and Wysa as valuable supplements—whether to help with eating disorders or to maintain routines—but always emphasize that human support remains essential.

One of the teenagers sums it up: “As much as I like AI for some of those reasons, I also think it’s important to see therapists … You just need that one-on-one communication…” (Tarpley, 2025).

The Reuters report also highlights the role of AI as a practical stopgap solution, for example in regions with limited access to psychotherapy.

In this account, AI serves as an accessible, albeit imperfect, source of support, but by no means as a permanent solution to the crisis.

Legal experts also warn against relying on AI in the long term as a substitute for human empathy, since AI lacks both the emotional depth and the therapeutic bond that only humans can provide (Richter, 2025).

These sources corroborate the study's results:

Gen Z is open to AI as a supportive tool, but clearly recognizes the added value of professional human assistance.

This perspective is relevant to debates about the future of mental health care, particularly at a time when digital and human forms of support should increasingly be integrated to create truly effective and ethically responsible services.

Closing words

The results of this study clearly show that artificial intelligence (AI) already plays a significant role in the mental and emotional lives of Generation Z in Switzerland.

Nearly half of the respondents have already used ChatGPT for personal or emotional matters.

This suggests that AI is perceived not only as a technical tool but also as an accessible point of contact.

What is particularly striking is the ambivalent attitude of the respondents:

On the one hand, most of them consider ChatGPT’s responses to be helpful and trustworthy, appreciate the tool’s anonymity and neutrality, and are increasingly using it in sensitive contexts.

On the other hand, there are significant concerns regarding data protection, accountability, and a lack of therapeutic expertise.

This tension between trust and skepticism is characteristic of a generation that openly embraces digital innovations while at the same time viewing them with a critical eye.

The results also show that structural factors such as high costs and long wait times in the Swiss healthcare system have a significant impact on the use of AI.

In this context, ChatGPT is seen as a practical tool that is always available: not as a substitute for professional help, but as a supplement to it.

This also confirms a clear international trend: AI is being used to overcome barriers, yet the importance of human guidance remains undisputed.

This presents a twofold challenge for academia, policymakers, and practitioners:

On the one hand, it is important to make responsible use of the opportunities offered by AI-based solutions by integrating them into existing healthcare systems.

On the other hand, the risks must be clearly addressed in order to ensure trust and security in the long term.

The study makes it clear:

Generation Z in Switzerland recognizes the potential of AI, but is also aware of its limitations.

This opens up a space for innovation in which digital and traditional forms of mental health support must be considered together.

This can pave the way for a modern, accessible, and responsible approach to mental health.

Bibliography

AP News (December 27, 2023): Teens turn to ‘AI companions’ for support, raising concerns among parents and experts https://apnews.com/article/ai-companion-generative-teens-mental-health-9ce59a2b250f3bd0187a717ffa2ad21f

AP News (May 10, 2025): Experts warn that AI chatbots sometimes fail to identify suicide risks https://apnews.com/article/ai-chatbots-selfharm-chatgpt-claude-gemini-da00880b1e1577ac332ab1752e41225b

Axios (March 23, 2025): This empathy chatbot just passed the Turing test for therapists https://www.axios.com/2025/03/23/empathy-chatbot-turing-therapist

Frontiers (2023): Artificial intelligence chatbots in mental health: A review of the evidence, Frontiers in Digital Health, 5, 1278186 https://www.frontiersin.org/journals/digital-health/articles/10.3389/fdgth.2023.1278186/full

KOF ETH Zurich (November 21, 2024): Health care spending rises to over 100 billion francs https://kof.ethz.ch/news-und-veranstaltungen/medien/medienmitteilungen/2024/11/gesundheitsausgaben-steigen-auf-ueber-100-mrd-franken.html

Nature (2024): Talking to machines: Generative AI chatbots as emotional companions, Mental Health Research, 3, Article 97 https://www.nature.com/articles/s44184-024-00097-4

New York Post (August 29, 2025): Experts call for AI regulation as parents sue over teen suicides https://nypost.com/2025/08/29/tech/experts-call-for-ai-regulation-as-parents-sue-over-teen-suicides

Psychotext (2025): Study: Waiting Times for Psychologists in Switzerland https://www.psychotext.ch/studien/wartezeit-bei-psychologinnen

Reuters (August 23, 2025): “It saved my life”: People are turning to AI therapy https://www.reuters.com/lifestyle/it-saved-my-life-people-turning-ai-therapy-2025-08-23

Tarpley, J. (January 15, 2025): Teens are turning to AI therapy chatbots to cope with eating disorders https://www.teenvogue.com/story/ai-therapy-chatbot-eating-disorder-treatment

U.S. National Library of Medicine (2022): Conversational agents in mental health: Systematic review, JMIR Mental Health, 9(11), e9758643 https://pmc.ncbi.nlm.nih.gov/articles/PMC9758643

U.S. National Library of Medicine (2023): Efficacy of chatbot-based mental health interventions: A systematic review and meta-analysis, Journal of Medical Internet Research, 25, e10625083 https://pmc.ncbi.nlm.nih.gov/articles/PMC10625083

U.S. National Library of Medicine (2024): Effectiveness of chatbot-based interventions for young adults: A meta-analysis, Psychiatric Services https://pmc.ncbi.nlm.nih.gov/articles/PMC12261465/

Wall Street Journal (April 2, 2025): ChatGPT linked to manic episodes: Psychologists warn of “AI psychosis” https://www.wsj.com/tech/ai/chatgpt-chatbot-psychology-manic-episodes-57452d14

Appendix

Demographics of the respondents